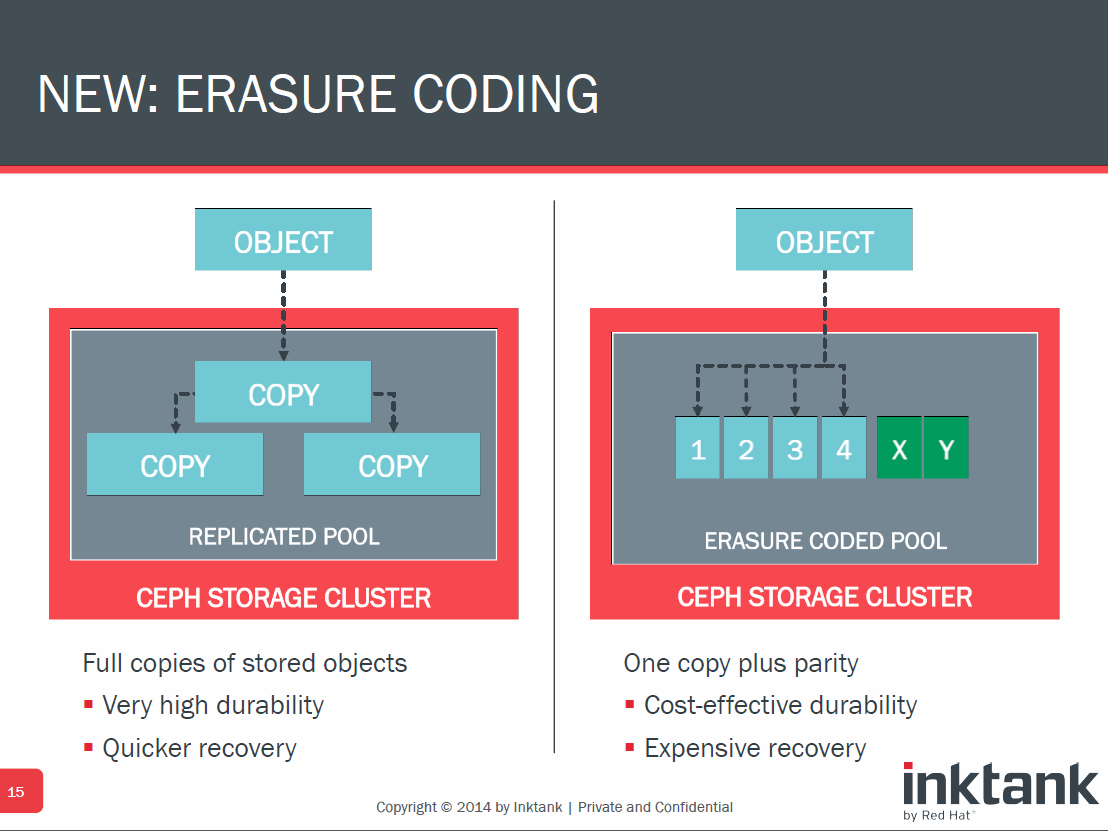

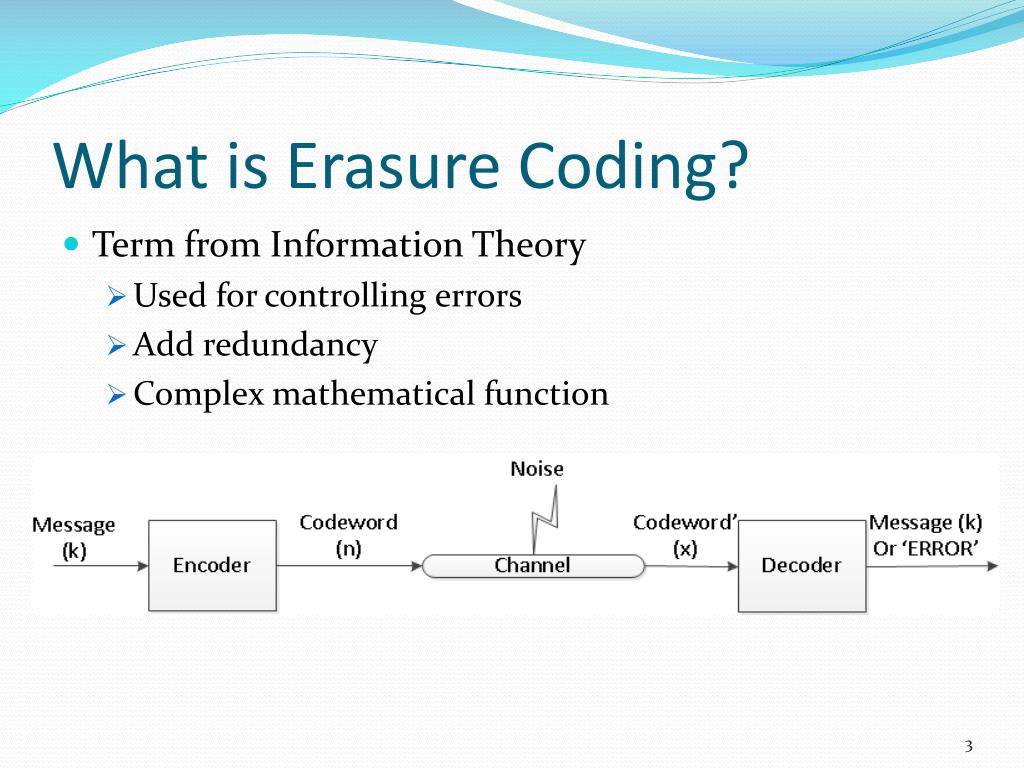

Does there need to be a third header to build a consensus? Sure, this protects against data loss within a chunk, but what if the corruption occurs in the headers? Would two lost bytes (one in the starting header and one in the ending header) mean the whole chunk is lost? How robustly does it try to reconstruct the header from the two parts? Duplicacy could try to mix and match the three pieces of the header to satisfy the checksum.

Thinking about this more, though, I have more questions about the parity being within the chunk. It would increase the amount of space consumed, but that's what parity does. Cross-chunk parity would basically just be more data that hangs around a bit longer before it's pruned.

Yeah, cross-chunk parity was a concern of mine as well, though it's not too different from the current case where a chunk can't be pruned because it contains a few bytes of referenced data. However, I do think Duplicacy needs extra checks to make sure that at least the size of each uploaded chunk is correct, and that there are no situations where we end up with 0-byte files due to lack of disk space or similar failures. The regular check command is inexpensive enough to detect such situations, so you can fix a storage in good time. If chunks go missing later, that is most definitely an underlying storage/filesystem issue, which I don't think parity should solve. In fact, with the proper logic, Duplicacy should only upload a snapshot file once all the chunks have been uploaded. I personally don't think missing chunks should, under normal circumstances, be a common failure mode.
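To make the "mix and match the pieces of the header" idea concrete, here is a minimal sketch of how a reader could rebuild a header from several damaged copies by checking candidates against a checksum. It does not describe Duplicacy's actual chunk format: the header layout (payload plus an 8-byte truncated SHA-256 digest) and the names `seal`, `is_valid` and `recover_header` are hypothetical, chosen only for illustration.

```python
from __future__ import annotations

import hashlib
import itertools
from collections import Counter

# Hypothetical header layout (NOT Duplicacy's real format):
# payload bytes followed by an 8-byte truncated SHA-256 digest of the payload.
DIGEST_LEN = 8


def seal(payload: bytes) -> bytes:
    """Append a short checksum so each header copy can validate itself."""
    return payload + hashlib.sha256(payload).digest()[:DIGEST_LEN]


def is_valid(header: bytes) -> bool:
    """Return True if the stored digest matches the payload."""
    payload, digest = header[:-DIGEST_LEN], header[-DIGEST_LEN:]
    return hashlib.sha256(payload).digest()[:DIGEST_LEN] == digest


def recover_header(copies: list[bytes], segments: int = 4) -> bytes | None:
    """Rebuild a header from several equally sized, possibly corrupted copies."""
    # 1. Any copy that already passes its checksum is accepted as-is.
    for copy in copies:
        if is_valid(copy):
            return copy

    # 2. Byte-wise majority vote; this only really helps with three or more copies.
    voted = bytes(Counter(column).most_common(1)[0][0] for column in zip(*copies))
    if is_valid(voted):
        return voted

    # 3. Mix and match: split every copy into segments and try each combination
    #    of "which copy supplies this segment" until the checksum is satisfied.
    length = len(copies[0])
    bounds = [length * i // segments for i in range(segments + 1)]
    for choice in itertools.product(range(len(copies)), repeat=segments):
        candidate = b"".join(
            copies[source][bounds[i]:bounds[i + 1]] for i, source in enumerate(choice)
        )
        if is_valid(candidate):
            return candidate

    # Every segment combination still contains corruption.
    return None
```

With two copies and one bad byte in each, recovery succeeds as long as the two bad bytes land in different segments; a third copy lets the cheaper majority vote succeed first. The search costs `len(copies) ** segments` checksum evaluations, so the segment count should stay small.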
I did wonder myself whether this would be a concern, but the implementation complexity of adding cross-chunk parity would be a nightmare to deal with… Actually, unless I'm misunderstanding the way you've diagrammed things here, it looks like the current scheme also only protects against corruption within a chunk, not against a missing chunk.

RAID, or Redundant Array of Independent Disks, is a familiar concept to most IT professionals. It's a way to spread data over a set of drives so that the loss of one drive doesn't cause permanent loss of data. RAID falls into two categories: either a complete mirror image of the data is kept on a second drive, or parity blocks are added to the data so that failed blocks can be recovered. However, RAID comes with its own set of issues, which the industry has worked to overcome by developing new techniques, including erasure coding. Organizations need to weigh the pros and cons of the various data protection approaches when designing their storage systems.

First, let's look at the challenges that come with RAID. Both approaches described above increase the amount of storage used. Mirroring obviously doubles data size, while parity typically adds about one-fifth more, though this depends on how many drives are in a set. Writing an updated block involves two drive write operations, and with parity the update may require reading blocks from all the drives in the set. When a drive fails, things start to get a bit rough. Typically an array has a spare drive or two for this contingency; otherwise the failed drive has to be replaced first. The next step involves copying data from the good drives to the replacement drive. This is easy enough with mirroring, but there is a risk that a defect in one of the billions of blocks on the good drive causes unrecoverable data loss. Parity recovery takes much longer, since all the data on all the remaining drives in the set has to be read to regenerate the missing blocks.
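Single-parity RAID is commonly built on XOR, which makes the costs described above easy to see. The sketch below is illustrative rather than any particular controller's implementation, and the function names are hypothetical. It shows that rebuilding a lost block needs every surviving block in the stripe, that an update is a read-modify-write ending in two writes (data and parity), and that one parity drive per five data drives gives the one-fifth overhead.

```python
from fractions import Fraction


def xor_blocks(blocks: list[bytes]) -> bytes:
    """Byte-wise XOR of equally sized blocks."""
    out = bytearray(len(blocks[0]))
    for block in blocks:
        for i, byte in enumerate(block):
            out[i] ^= byte
    return bytes(out)


def make_parity(data_blocks: list[bytes]) -> bytes:
    """Single parity block: the XOR of every data block in the stripe."""
    return xor_blocks(data_blocks)


def rebuild_missing(surviving_blocks: list[bytes], parity: bytes) -> bytes:
    """Regenerate one lost block; note that EVERY surviving block must be read."""
    return xor_blocks(surviving_blocks + [parity])


def update_parity(old_parity: bytes, old_data: bytes, new_data: bytes) -> bytes:
    """Read-modify-write: compute new parity without reading the other data blocks.

    The caller then writes new_data and the returned parity, i.e. two drive writes.
    """
    return xor_blocks([old_parity, old_data, new_data])


def capacity_overhead(data_drives: int, redundancy_drives: int) -> Fraction:
    """Extra capacity needed, relative to the raw data size."""
    return Fraction(redundancy_drives, data_drives)
```

For example, `capacity_overhead(1, 1)` is 1 (a mirror doubles the data) and `capacity_overhead(5, 1)` is 1/5. Using `Fraction` keeps those ratios exact instead of approximating them as floats.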
During a parity rebuild, the loss of another drive, or a bad block on any surviving drive, will cause data loss. In response to this risk, the industry created a dual-parity approach, in which two non-overlapping parities are created for each drive set. This increases capacity overhead to two-sevenths or thereabouts in typical arrays.
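One widely described way to build the two parities is to make P a plain XOR and Q a Reed-Solomon-style sum in the finite field GF(2^8), where each data block is weighted by a different power of a generator before combining. The sketch below assumes the polynomial 0x11D and generator 2; the function names are hypothetical, and real arrays differ in stripe layout and rotation. It shows that any two missing data blocks can be solved for from P, Q and the survivors.

```python
from __future__ import annotations

# GF(2^8) arithmetic using the primitive polynomial x^8+x^4+x^3+x^2+1 (0x11D)
# and generator g = 2 (an assumed, commonly cited choice for RAID 6 Q parity).
EXP = [0] * 512
LOG = [0] * 256
value = 1
for power in range(255):
    EXP[power] = value
    LOG[value] = power
    value <<= 1
    if value & 0x100:
        value ^= 0x11D
for power in range(255, 512):
    EXP[power] = EXP[power - 255]  # duplicate so LOG[a] + LOG[b] needs no modulo


def gf_mul(a: int, b: int) -> int:
    if a == 0 or b == 0:
        return 0
    return EXP[LOG[a] + LOG[b]]


def gf_div(a: int, b: int) -> int:
    if b == 0:
        raise ZeroDivisionError("division by zero in GF(2^8)")
    if a == 0:
        return 0
    return EXP[(LOG[a] - LOG[b]) % 255]


def pq_parity(data: list[bytes]) -> tuple[bytes, bytes]:
    """P is the plain XOR of the blocks; Q weights block i by g**i before XOR-ing."""
    p = bytearray(len(data[0]))
    q = bytearray(len(data[0]))
    for i, block in enumerate(data):
        for j, byte in enumerate(block):
            p[j] ^= byte
            q[j] ^= gf_mul(EXP[i], byte)
    return bytes(p), bytes(q)


def recover_two(data: list, x: int, y: int, p: bytes, q: bytes) -> tuple[bytes, bytes]:
    """Rebuild data blocks x and y (x != y), which are missing from `data`."""
    pxy, qxy = bytearray(p), bytearray(q)
    # Strip the surviving blocks out of both parities.
    for i, block in enumerate(data):
        if i in (x, y):
            continue
        for j, byte in enumerate(block):
            pxy[j] ^= byte
            qxy[j] ^= gf_mul(EXP[i], byte)
    # Now pxy = Dx + Dy and qxy = g^x*Dx + g^y*Dy (+ is XOR); solve for Dx, then Dy.
    gx, gy = EXP[x], EXP[y]
    dx = bytes(gf_div(gf_mul(gy, pj) ^ qj, gx ^ gy) for pj, qj in zip(pxy, qxy))
    dy = bytes(pj ^ dxj for pj, dxj in zip(pxy, dx))
    return dx, dy
```

With seven data blocks and the two parity blocks, the overhead is 2/7, matching the figure above. The price is that a two-drive recovery has to read every surviving block and do field arithmetic on every byte.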

RAID 6, the dual-parity approach, took a major hit with the release of 4TB and larger drives. Again, recovery involves reading one of the parities and all of the remaining data, and it can take a long while. Rebuilds are measured in days, and the risk of another drive failing during that window, which could then lead to data loss through bad blocks, is approaching the threshold of unacceptable. This put the industry at a fork in the road.
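A back-of-the-envelope model shows why large drives push rebuilds into days. Apart from the 4 TB drive size, every input below is an assumption chosen for illustration: a sustained rebuild rate of 20 MB/s (arrays often throttle rebuilds while still serving I/O), a 2% annual failure rate per drive, and one unrecoverable read error per 10^14 bits read. The failure model assumes a constant failure rate, and the read-error model uses a Poisson approximation.

```python
import math

HOURS_PER_YEAR = 24 * 365


def rebuild_hours(capacity_tb: float, rate_mb_per_s: float) -> float:
    """Hours to rewrite one drive at a sustained rebuild rate (decimal TB and MB)."""
    return capacity_tb * 1e12 / (rate_mb_per_s * 1e6) / 3600


def p_another_failure(drives: int, annual_failure_rate: float, hours: float) -> float:
    """Chance at least one of `drives` fails within `hours` (constant failure rate)."""
    return 1 - math.exp(-drives * annual_failure_rate * hours / HOURS_PER_YEAR)


def p_read_error(bytes_read: float, ure_per_bit: float = 1e-14) -> float:
    """Chance of at least one unrecoverable read error (Poisson approximation)."""
    return 1 - math.exp(-bytes_read * 8 * ure_per_bit)


if __name__ == "__main__":
    # Assumed scenario: 8 x 4 TB drives in a 6+2 RAID 6 set, one drive has failed.
    hours = rebuild_hours(4, 20)
    print(f"rebuild time: {hours:.1f} h ({hours / 24:.1f} days)")
    print(f"P(second failure during rebuild): {p_another_failure(7, 0.02, hours):.4%}")
    # Rebuild reads the remaining data plus one parity: 6 drives' worth, 24 TB.
    print(f"P(read error over 24 TB): {p_read_error(24e12):.1%}")
```

Under these assumptions a single 4 TB rebuild takes about 56 hours (roughly 2.3 days), and reading 24 TB has about an 85% chance of hitting at least one unrecoverable read error. RAID 6 can still correct that error while only one drive is down, but once a second drive fails during the long rebuild window, any such bad block means lost data.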
